A virtual machine is more similar to a physical computer than you may think. It has a central processing unit (CPU), physical or network-based storage space, and the ability to connect to the internet.

There’s a subtle difference, though. A VM is a software-based, virtual computer that exists only as code. While you can’t see or feel a virtual machine, these virtual versions of computers pack a punch.

Creating a virtualized instance of a physical computer is called virtualization — we’ll learn more about this in the next section. Read on to understand the ins and outs of virtual machines.

Understanding Virtual Machines

As promised, let’s explore virtualization. It’s the process of creating a virtualized instance of a physical computer with dedicated CPU power, memory, and storage.

These resources come from a physical host, which can be a personal computer or a remote server. Each VM runs as an independent partition of that host system.

Some important applications of virtual machines include:

- Developing and deploying applications to the cloud.

- Testing new operating systems (OSes), including beta releases.

- Creating independent virtual environments with dedicated resources to run development testing (DevTest) scenarios.

- Backing up your current operating system.

- Installing an older OS version to run an old application or access potentially infected (virus-infected) data.

- Running applications or software on operating systems they weren’t meant for.

What’s astonishing is that virtual instances exist only as computer files (called images) yet behave like an actual computer! Studying the evolution of virtualization technology, types of virtualization, and hypervisors will aid your understanding of virtual machines.

Evolution of Virtualization Technology

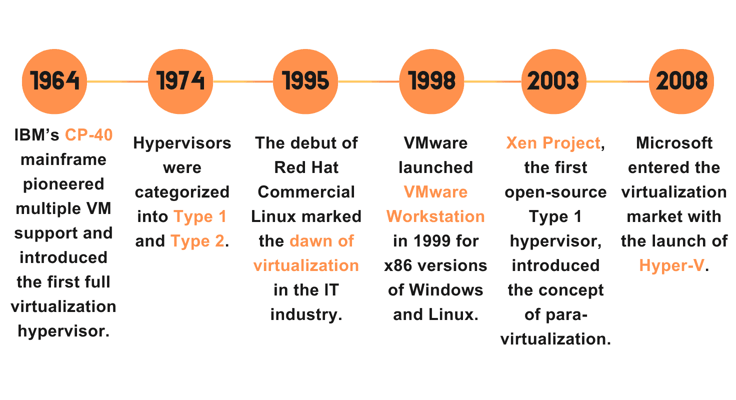

Virtualization technology has a long history, with its roots in the 1960s. Our goal isn’t to provide a history lesson, but understanding this evolution will help IT administrators implement VMs more effectively.

In 1964, IBM began developing CP-40, a control program that ran on a modified System/360 Model 40 mainframe. It was the first system to support multiple VMs for time-sharing production work and offered the first hypervisor for full virtualization.

Ten years later, the hypervisor was classified into Type 1 (hypervisors that run on bare metal) and Type 2 (hypervisors that run on top of an OS).

Virtualization began its foray into the IT industry in the 1990s, alongside the introduction of Red Hat Commercial Linux (an OS built on the Linux kernel) in 1995.

VMware was founded in 1998, and VMware Workstation, which ran on the popular x86 versions of Windows and Linux operating systems, was launched in 1999.

Virtualization took off with enterprise-level solutions in the 2000s. In 2003, the Xen Project released the first open-source Type 1 hypervisor and brought para-virtualization into the mainstream.

Not one to be left behind, Microsoft made a significant market move with Hyper-V in 2008. Virtualization in modern IT focuses heavily on cloud capabilities, and it’ll be interesting to see what the future has in store.

Types of Virtualization: Full Virtualization vs. Para-Virtualization

We mentioned the terms “full virtualization” and “para-virtualization” in the previous section.

Pioneered by IBM in the 1960s, full virtualization completely isolates each virtual instance: the guest OS runs unmodified and behaves as if it had full control of its allocated resources. On x86 hardware, it has traditionally relied on binary translation combined with direct execution, and it can support just about any operating system on the market.

The para-virtualization technique modifies the guest OS kernel at compile time, replacing instructions that are hard to virtualize with hypercalls, which are direct requests to the hypervisor. Compared to full virtualization, it’s often faster and more streamlined. However, it’s less portable, offers only partial isolation from hardware, and supports select operating systems only.

Hypervisors: Key Components of Virtualization

If you think operating systems are cool, wait till you learn about hypervisors.

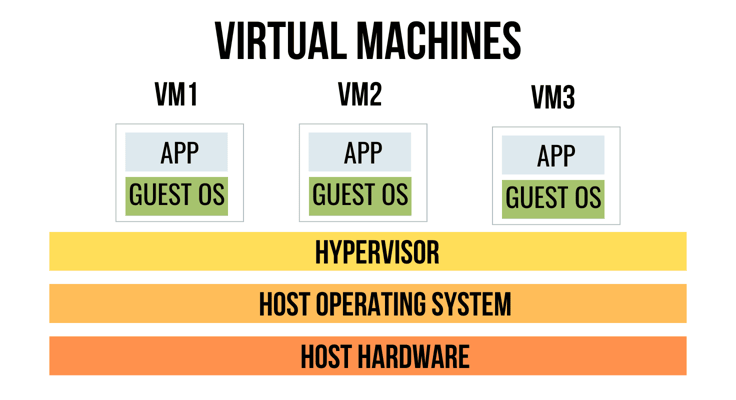

Hypervisors, also known as Virtual Machine Managers (VMMs), are at the center of the virtualization process, as they enable the creation and management of VMs.

Each VM is assigned an independent guest operating system and dedicated resources. Different operating system types can be run concurrently on virtual machines.

Mind you, hypervisors don’t really replace host operating systems — you can say they complement them instead. They’re an invaluable tool for virtualization and cloud computing, as they enhance resource utilization, system flexibility, and isolation (which is perfect for testing environments).

How Virtual Machines Work

You now have a decent understanding of what virtual machines are and where they came from.

Let’s enhance your knowledge by exploring the workings of the virtualization layer and guest operating systems, the allocation of hardware resources to guest OSes, virtual networking and storage, and snapshot and cloning features.

Virtualization Layer and Guest OS

All virtual machines exist within the virtualization layer, which is facilitated by a hypervisor (VMM) and enables numerous virtual machines to coexist in isolation on a single physical computer system.

You can think of it as a layer separating the host operating system and physical hardware from virtual machines.

The hypervisor allocates physical hardware resources to these virtual machines, each of which operates a guest operating system that is either partially isolated (para-virtualization) or fully isolated (full virtualization).

Allocation of Hardware Resources

The hypervisor is responsible for fairly allocating hardware resources to virtual machines. Type 1 hypervisors have direct access to these resources, while Type 2 hypervisors rely on the host OS for resource allocation, creating additional overhead.

The hypervisor allocates an appropriate share of CPU resources, memory, storage, and network bandwidth to each VM. There’s no monopoly in resource allocation — policies like equal share, reservation, and priority apply.
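To make the reservation and priority policies concrete, here is a minimal sketch of how CPU cores could be divided among VMs. It assumes a simplified model in which each VM first receives its guaranteed reservation and any spare capacity is then split by relative share weight. The `VMRequest` class, the `allocate_cpu` function, and the numbers are hypothetical illustrations, not the scheduler of any particular hypervisor.

```python
from dataclasses import dataclass


@dataclass
class VMRequest:
    name: str
    reservation: float  # guaranteed minimum, in CPU cores (hypothetical unit)
    shares: int         # relative priority weight for spare capacity


def allocate_cpu(total_cores: float, requests: list[VMRequest]) -> dict[str, float]:
    """Give each VM its reservation, then split what is left by share weight."""
    reserved = sum(r.reservation for r in requests)
    if reserved > total_cores:
        raise ValueError("reservations exceed host capacity")

    spare = total_cores - reserved
    total_shares = sum(r.shares for r in requests)

    allocation = {}
    for r in requests:
        # A VM with twice the shares gets twice as much of the spare capacity.
        bonus = spare * r.shares / total_shares if total_shares else 0.0
        allocation[r.name] = r.reservation + bonus
    return allocation


if __name__ == "__main__":
    vms = [
        VMRequest("web", reservation=2.0, shares=1),
        VMRequest("db", reservation=4.0, shares=2),
        VMRequest("batch", reservation=0.0, shares=1),
    ]
    print(allocate_cpu(16.0, vms))
```

Setting every VM to the same share weight and a zero reservation turns this into the equal-share policy.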

Virtual Networking and Storage

Virtual machines are meant to be partially or fully isolated, but virtual networking technology can establish a connection between VMs, virtual servers, and other assets in a virtualized environment.

Data communication is made possible through private IPs, enabling several use cases, including complex testing and development, internet access, and performance optimization.
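As a rough illustration of private addressing, the sketch below hands out addresses from a private subnet to a list of VMs using Python’s standard `ipaddress` module. The subnet, the VM names, and the choice to reserve the first usable address for a virtual gateway are assumptions made for this example; real virtual networks are usually configured through the hypervisor or cloud platform.

```python
import ipaddress
from itertools import islice


def assign_private_ips(subnet: str, vm_names: list[str]) -> dict[str, str]:
    """Assign one address per VM from a private subnet."""
    network = ipaddress.ip_network(subnet)
    if not network.is_private:
        raise ValueError(f"{subnet} is not a private address range")

    # Skip the first usable address, reserved here for a virtual gateway (assumption).
    addresses = list(islice(network.hosts(), 1, 1 + len(vm_names)))
    if len(addresses) < len(vm_names):
        raise ValueError("subnet is too small for the requested VMs")

    return {name: str(addr) for name, addr in zip(vm_names, addresses)}


if __name__ == "__main__":
    print(assign_private_ips("10.0.0.0/29", ["vm-a", "vm-b", "vm-c"]))
```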

Virtual machines are like physical computers in many ways — they require storage space for data, system files, and programs. Each VM has its own virtual drive, and a hypervisor can assign either physical or network-based storage to it.

Virtual machines can also draw on shared storage pools, which generally hold data such as virtual machine disk (VMDK) files. To ensure VM data is preserved, regular backups are stored on separate storage devices or hard disks.

Snapshot and Cloning Features

Snapshots and cloning are crucial development features in virtual environments.

A snapshot captures the point-in-time state of a VM and enables developers to revert to that precise state if necessary.

Snapshots are particularly useful for backup and recovery, testing and troubleshooting, and software development.

As the name suggests, cloning refers to the process of creating a duplicate of a VM instance — while it shares the same properties, it’s independent and unique.

Full cloning refers to creating an exact duplicate of a VM, while linked cloning creates a space-efficient duplicate that shares virtual disks but possesses its own configuration files.
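The difference between snapshots, full clones, and linked clones is easier to see in a toy model. The sketch below treats a VM’s disk as a simple dictionary. The `ToyVM` class and its methods are hypothetical and exist only for illustration; real hypervisors track disk blocks and memory state rather than Python objects.

```python
import copy
from collections import ChainMap


class ToyVM:
    """In-memory stand-in for a VM. The 'disk' is just file name -> content."""

    def __init__(self, name, disk=None):
        self.name = name
        self.disk = {} if disk is None else disk
        self._snapshots = {}

    def snapshot(self, label):
        # Freeze a point-in-time copy of the disk under a label.
        self._snapshots[label] = copy.deepcopy(dict(self.disk))

    def revert(self, label):
        # Restore the disk to exactly what it was when the snapshot was taken.
        self.disk = copy.deepcopy(self._snapshots[label])

    def full_clone(self, name):
        # Independent duplicate: everything on the disk is copied.
        return ToyVM(name, copy.deepcopy(dict(self.disk)))

    def linked_clone(self, name, label):
        # Shares the snapshot as a base and stores only its own changes on top.
        return ToyVM(name, ChainMap({}, self._snapshots[label]))


if __name__ == "__main__":
    vm = ToyVM("base", {"app.conf": "v1"})
    vm.snapshot("clean")
    vm.disk["app.conf"] = "broken"
    vm.revert("clean")
    print(vm.disk)

    linked = vm.linked_clone("test-1", "clean")
    linked.disk["notes.txt"] = "only in the clone"
    print(linked.disk["app.conf"], linked.disk.maps[0])
```

The linked clone reads unchanged files from the shared base and keeps only its own writes, which is why it takes up so little extra space.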

Benefits

Virtualization is integral to cloud computing and makes it possible to maximize physical computer hardware resources. We’ve made clear the many applications of virtual machines, and now it’s time to dive into the benefits of implementing virtualization technology.

The benefits of virtual machines make these applications possible:

- Resource isolation: Each virtual machine operates in isolation and has a dedicated set of resources. Failures and software conflicts in one virtual machine environment won’t impact other VM instances, leading to greater system stability and security.

- Server consolidation and resource optimization: Consolidating several underused physical servers into virtual machines on fewer hosts can enhance resource utilization, as VMs share hardware efficiently and keep each host working closer to capacity.

- Scalability and flexibility: VM instances offer great scalability and flexibility when compared to traditional physical infrastructure. You can simply capture or duplicate the state of a VM instance and move it to other servers and data centers at will.

- Cost savings: Instead of repeatedly acquiring physical computers and servers when you run out of resources, organizations can employ virtualization to maximize existing resources and purchase new equipment only when absolutely necessary. VMs also enable you to extend the lifespan of old software and data (we touched on this earlier).

- Energy efficiency: Similarly, by choosing not to purchase additional hardware equipment for computation and going the VM way, energy efficiency is maximized, too. Less equipment used at nearly full potential means less energy will be expended in the grand scheme.

- Improved disaster recovery: Snapshots allow you to capture the current state of a virtual machine, use it for testing and development, and restore it to its previous state if need be. You can think of this as a simplified backup and recovery point mechanism (cloning plays a similar role). Essentially, VMs streamline disaster recovery testing and reduce its associated costs.

- High availability: Virtual machines deliver availability to applications independently of the host operating system, automatically reducing application downtime. They also optimize resource allocation and simplify provisioning, both of which lead to high availability.

Imagine being able to run a Linux virtual machine on a Windows OS host and older Windows OS versions on a Windows 10 or Windows 11 operating system host — virtualization enables this and much more. Let’s move on to the categories of virtual machines.

Types

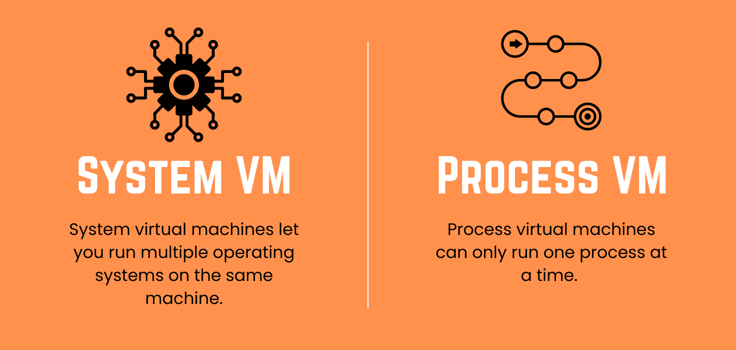

Not all virtual machines run independent operating systems. If you’ve ever coded in Java, for example, you’ve heard of the Java virtual machine (JVM). It’s a run-time engine that executes Java programs after they’ve been compiled to bytecode.

JVM runs a single process at a time and operates within an existing operating system. You must be wondering which VM category JVM falls under (hint: it runs processes), so without wasting any time, let’s explore both.

System Virtual Machines

So far, your idea of a VM is a system virtual machine — a virtualized, isolated environment with dedicated hardware resources and a guest operating system.

Each virtual machine is a complete system. System virtual machines are useful in cases where you have to run multiple operating systems in parallel and complete isolation on the same system.

Popular system virtual machine solutions include VMware, Xen, VirtualBox, QEMU, Parallels, and Windows Virtual PC.

Process Virtual Machines

Process virtual machines are also referred to as application virtual machines. They only run one process at a time — they’re created when a process starts and destroyed when it ends.

Unlike system virtual machines, process virtual machines operate on the host operating system, provide platform-independent programming capabilities, and abstract away operating system and hardware details.

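Popular process virtual machines include the JVM and the .NET Common Language Runtime (CLR). Wine, a compatibility layer for running Windows applications on Unix-like systems, is often mentioned alongside them.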
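To get a feel for what a process virtual machine does, here is a deliberately tiny stack-based interpreter. The opcodes (`PUSH`, `ADD`, `MUL`, `PRINT`) are invented for this sketch and are not real JVM bytecode. The point is only that the same instruction list runs unchanged wherever the interpreter itself runs, which is the platform independence described above.

```python
def run(program: list[tuple]) -> list:
    """Execute (opcode, *operands) tuples on a simple stack machine."""
    stack, output = [], []
    for op, *args in program:
        if op == "PUSH":
            stack.append(args[0])
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "MUL":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        elif op == "PRINT":
            # Collect output instead of printing so callers can inspect it.
            output.append(stack.pop())
        else:
            raise ValueError(f"unknown opcode: {op}")
    return output


if __name__ == "__main__":
    # Computes (2 + 3) * 4 using the made-up instruction set.
    program = [
        ("PUSH", 2),
        ("PUSH", 3),
        ("ADD",),
        ("PUSH", 4),
        ("MUL",),
        ("PRINT",),
    ]
    print(run(program))
```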

Use Cases

Virtual machines are a powerful tool for numerous tasks, as they enable multiple isolated virtualized environments to exist on a single system, provide efficient resource management, and afford immense flexibility and scalability.

We’ve briefly touched upon the applications of virtual machines, and now it’s time to expand on them.

Development and Testing Environments

Virtual machines are invaluable for development and testing. By setting up isolated virtual machines on a local workstation, developers can run the same code on different VMs with varying configurations and operating systems.

They can also use features like snapshots and backups to streamline development, troubleshooting, and recovery.

If the VM environments are connected to a cloud server, the developer can directly deploy the software to other users for testing purposes.

Speaking of testing, VMs allow you to test beta versions of software, validate test scripts, and ensure everything is in working order before making the final version available to users.

Server Virtualization

Server virtualization (server consolidation) simplifies server management by eliminating the need for several large servers with sub-par performance.

This technique maximizes server efficiency.

For context, consolidating numerous VMs on a single server optimizes resource use, allows workloads to be deployed quickly, increases system performance (through dynamic resource allocation), enhances system reliability, eliminates server sprawl, and reduces operational costs.
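Consolidation is essentially a packing problem: fit as many VMs as possible onto as few hosts as possible. The sketch below uses a first-fit-decreasing heuristic, a common approximation for bin packing, on a single resource (memory in GB). The function name, VM names, and sizes are made up for the example, and real placement tools weigh CPU, storage, and availability rules as well.

```python
def consolidate(vm_demands: dict[str, int], host_capacity: int) -> list[list[str]]:
    """Place VMs on hosts using first-fit decreasing; returns VM names per host."""
    hosts: list[list[str]] = []
    free: list[int] = []  # remaining capacity of each host

    # Placing the largest VMs first tends to leave fewer awkward gaps.
    by_size = sorted(vm_demands.items(), key=lambda kv: kv[1], reverse=True)
    for name, demand in by_size:
        if demand > host_capacity:
            raise ValueError(f"{name} does not fit on any host")
        for i, remaining in enumerate(free):
            if demand <= remaining:
                hosts[i].append(name)
                free[i] -= demand
                break
        else:
            # No existing host has room, so bring another host into use.
            hosts.append([name])
            free.append(host_capacity - demand)
    return hosts


if __name__ == "__main__":
    demands = {"web": 8, "db": 16, "cache": 4, "ci": 12, "mail": 6, "wiki": 2}
    for i, vms in enumerate(consolidate(demands, host_capacity=32), start=1):
        print(f"host {i}: {vms}")
```

In this made-up scenario, six workloads that might each have occupied their own machine fit on two 32 GB hosts.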

Cloud Computing Infrastructure

If you understand how cloud computing works, you’ll realize virtualization techniques are at the heart of cloud computing infrastructure.

Dynamic provisioning, scaling, and de-provisioning of virtual resources based on demand benefits cloud providers, as it enables the efficient allocation of resources.

Since VM environments operate independently, they add another layer of security — the failure of one virtual machine instance won’t affect the entire operation of a cloud provider.

Additionally, VMs can be easily migrated between physical servers, enabling easy maintenance, effective load balancing, backups, and disaster recovery.

Desktop Virtualization (VDI)

Let’s imagine you’re traveling to Thailand for a business trip and forgot to pack your laptop.

Instead of panic buying a cheap laptop upon arrival, what if I told you that you could access your desktop environment with all of its applications from the computer system in your hotel lobby?

Virtual desktop infrastructure (VDI) enables this.

In this model, you own a dedicated virtual machine with an OS environment that’s hosted in a data center. You can access the desktop image at will and enjoy a local experience.

Software Testing and Debugging

Virtual machines allow you to seamlessly run software in different VM environments, leading to more efficient testing and debugging.

Testing and debugging in different OS environments with varying configurations would be tedious if you had to manually install the software or use multiple devices.

More importantly, you can isolate and debug without affecting the host operating system or other virtual machines on the same device.

You can also take snapshots, which save different versions of your virtual setup, so you can easily go back to them if something goes wrong during troubleshooting or testing.

Factors to Consider When Using Virtual Machines

While virtual machines are advantageous and add flavor to your IT infrastructure, they may not suit all businesses.

For example, suppose you consistently use resource-intensive applications (especially those that rely on GPU). In that case, virtualization may not yield the best results — you could face issues when running them on virtualized instances.

Games that require serious processing power or graphics may also not respond well to VMs, although virtual machines are used in the gaming industry, especially during early software development and testing. Here are some factors to consider when using virtual machines.

Hardware Requirements

While server consolidation is recommended, you need to ensure the hardware capacity of your server is sufficient to support the desired number of virtual machines.

The last thing you need is to allocate too few resources to VM instances. For example, if your employees run out of resources, you’ll have to purchase more infrastructure.

Performance Considerations

Similarly, if each VM instance is allocated fewer resources than expected, you’ll have to adjust your performance expectations.

For example, if a particular server is built to support 100 virtualized environments at designated performance levels and you create 120 VM instances, the performance of each VM will be impacted.
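A quick back-of-the-envelope calculation shows the size of that impact. This sketch assumes the simplest possible model: every VM is busy at the same time and the host’s capacity is split evenly. Real workloads are rarely that uniform, so treat the result as a worst-case estimate. The function name is hypothetical.

```python
def per_vm_share(designed_vms: int, actual_vms: int) -> float:
    """Fraction of its designed performance each VM gets when all are busy."""
    return min(1.0, designed_vms / actual_vms)


if __name__ == "__main__":
    share = per_vm_share(designed_vms=100, actual_vms=120)
    print(f"Each VM gets about {share:.1%} of its designed performance")
```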

Security Measures

Some challenges come with implementing VMs:

- Uneven resource distribution could affect some VMs, as they could be starved of resources.

- Performance overhead is another issue due to virtualization layers.

- Lastly, handling several VMs isn’t as easy as it may seem — a robust management toolkit and experience are necessary.

To protect your VM environments, you need to account for these challenges and implement a rigorous security framework.

Licensing and Compliance

To avoid compliance issues, you must consider software licensing rules. It’s easy to understand why compliance issues may arise — imagine running licensed software meant for one system on several VM instances.

While numerous software license agreements enable virtual machine implementation, some don’t, and you may have to purchase multiple license types to stay compliant. Perform due diligence!

Virtual Machine Management and Deployment

While deploying a virtual machine is easy, it may be challenging to manage multiple VMs simultaneously.

You may face issues like virtual machine sprawl (dormant, forgotten VMs may pose security risks) and virtual machine escapes (an attacker breaks out of a compromised VM to reach the hypervisor, the host, or other VMs and access sensitive information).

Choosing the right virtualization platform should help you tackle these issues and confidently deploy new VM instances.

Choosing a Virtualization Platform

Oracle VM VirtualBox, VMware Workstation Player, Microsoft Hyper-V, and Parallels Desktop are a few of the most popular virtualization platforms.

These tools cater to specific requirements, so before buying one, look into each feature, analyze customer reviews, and compare pricing models.

- Oracle VM VirtualBox: This virtualization platform is a fascinating choice for development and testing, primarily owing to its robust hardware support and portability. You can create a VM instance on one host operating system and run it on another 64-bit host OS. It also offers features like accelerated 3D graphics, seamless windows, and shared folders.

- VMware Workstation Player: The only virtual machine platform on this list that supports a single VM environment at a time, VMware Workstation Player is a good option for managed corporate desktops, training, and learning. It’s compatible with Windows and Linux OSes and portable across VMware environments.

- Microsoft Hyper-V: This Windows-only virtualization platform allows you to create virtual devices like virtual switches and virtual hard drives and add them to your virtual machines. With Hyper-V, you can enjoy easy VM management and seamlessly move active VMs between physical servers with the live migration feature.

- Parallels Desktop: If you own a MacBook and want to run other operating systems on your device, like Windows and Linux, Parallels Desktop is the right virtualization tool for you. It allows you to smoothly transition between Windows and macOS without restarting your device, offers superior resource management, prioritizes security, receives frequent software updates, and offers handy tools for file management, video recording, and screenshots.

To summarize, Parallels Desktop should be your go-to solution for juggling between Windows and macOS virtual environments on a single device. VirtualBox is a magnificent, free solution for cross-platform development.

Finally, Hyper-V is a convenient solution for Windows users, especially if you already own a Windows Pro or Windows Enterprise license. While Workstation Player offers robust features, it supports only one VM at a time, and commercial use has historically required a paid license.

Provisioning and Configuring Virtual Machines

Setting up and running a virtual machine isn’t a piece of cake, and the process may vary depending on your virtualization platform of choice. Following these basic steps should help you stay on track:

- Check system support to ensure your computer hardware supports virtualization. You may need to enable virtualization extensions in your firmware (BIOS/UEFI) settings.

- Select a hypervisor that supports your operating system and matches your needs.

- Download and install the hypervisor, open it, and create a new VM environment.

- Configure the hardware settings of the VM and select an installation method.

- Install a compatible OS on the VM and all necessary drivers and software.

- Configure VM settings as required.

Voila! You’re all set to run basic operations on the virtual machine environment. We recommend reading setup guides and watching tutorials for a better understanding of the process — stick to guides and tutorials based on your platform of choice.
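The first step in the list, checking hardware support, can be sketched on an x86 Linux machine as follows. The CPU flags `vmx` (Intel VT-x) and `svm` (AMD-V) are listed in `/proc/cpuinfo` when the processor offers hardware virtualization. The function name is our own, and the check doesn’t apply to Windows, macOS, or non-x86 processors, which expose this information differently.

```python
from pathlib import Path


def virtualization_flags(cpuinfo_text: str) -> set[str]:
    """Return which of the 'vmx' (Intel VT-x) / 'svm' (AMD-V) flags are present."""
    found = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            found |= {"vmx", "svm"} & set(value.split())
    return found


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        flags = virtualization_flags(cpuinfo.read_text())
        print("hardware virtualization flags:", flags or "none reported")
    else:
        print("/proc/cpuinfo not found; this check only applies to Linux")
```

If no flag is reported on a CPU that should support virtualization, the extensions may simply be disabled in the firmware settings.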

Monitoring and Optimization

Good VM platforms offer built-in monitoring and optimization mechanisms. For example, you could analyze insights into virtual machine health and performance and attempt to optimize them.

You could also use cost alerts and policies to monitor usage and spending and reduce VM costs. Automation is also an important theme, which we will explore in the following section.

Automation and Orchestration

Autoscaling is a crucial feature. It permits automatic scaling up or down of hardware resources to ensure uninterrupted VM performance.

For example, if a VM is idle, it’s susceptible to security risks, and another instance may better use its resources. Dynamic resource adjustment through autoscaling takes care of these issues. We also recommend automating fixes using OS scripts or commands.

All background tasks, including automation, monitoring, and alerting, are orchestrated — this basically means they’re coordinated and carried out in proper sequence.
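The autoscaling idea from this section boils down to a simple rule: add resources when a VM is busy and reclaim them when it’s idle. Here is a minimal threshold-based sketch. The thresholds, the doubling and halving steps, and the function name are assumptions chosen for illustration; cloud platforms expose their own autoscaling policies and settings.

```python
def desired_vcpus(current: int, cpu_utilization: float,
                  low: float = 0.30, high: float = 0.80,
                  minimum: int = 1, maximum: int = 16) -> int:
    """Suggest a vCPU count based on recent average CPU utilization (0.0 to 1.0)."""
    if cpu_utilization > high:
        target = current * 2   # busy: scale up
    elif cpu_utilization < low:
        target = current // 2  # mostly idle: hand resources back
    else:
        target = current       # within the comfortable range: leave as-is
    # Never go below the minimum or above what the host can offer.
    return max(minimum, min(maximum, target))


if __name__ == "__main__":
    for load in (0.95, 0.50, 0.10):
        print(f"utilization {load:.0%}: 4 vCPUs -> {desired_vcpus(4, load)}")
```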

Challenges and Limitations

Nothing in life is perfect. While virtualization technology offers numerous benefits, it has significant challenges and limitations as well.

Common virtualization challenges include performance overhead, security risks, compatibility issues, and licensing complexity.

- Performance overhead: Creating a virtualized instance to run applications can lead to performance overhead, as the instance requires a separate operating system and additional hardware resources. Running the same applications directly on the physical computer (host OS) could lead to faster performance.

- Security risks: Virtualized environments can pose security threats if they’re left idle or don’t follow best practices. Use the same security mechanisms you do for physical computers. Always monitor resource distribution and disable all unnecessary functions within VM environments.

- Compatibility issues: While virtual machines can run old operating system versions and applications, hinting at great compatibility with legacy and modern systems, you may face frustrating compatibility issues with network adapters, virtual disks, optical drives, and processors (32-bit vs. 64-bit). Most of these issues can be resolved easily — drink coffee, watch relevant tutorials, and solve them with a smile.

- Licensing complexity: Staying compliant is a top priority for any organization. Ensure you run software only on the number of VMs it’s licensed for. You may have to buy multiple software editions to remain compliant, and while this may cost serious money, it’s worth it.

Another limitation of virtual machines is performance monitoring. While physical systems like hard drives and mainframes can be easily monitored, virtualized environments are more challenging.

Future Trends

Earlier, we talked about how virtualization can fall short of high-end gaming demands. GPU virtualization, driven in part by demand from artificial intelligence (AI) and machine learning (ML) workloads, is starting to change that.

The effective distribution of GPU power may see mainstream gamers embrace virtual machines (it’s already a hit in cloud gaming). This is one of the many future trends related to virtual machines that’s got me super excited! Let’s explore others.

Containerization vs. Virtual Machines

Virtual machines and containers both isolate workloads but are quite different from one another: a VM runs its own guest operating system, while containers share the host’s kernel.

You can think of a container as a portable, isolated software package that bundles code, runtime, system libraries and tools, and settings. Containers are lightweight and efficient, and they’re slowly but surely replacing VMs for new deployments.

However, VMs still have a role to play, especially in legacy systems, and we’re likely to see specialized VM optimizations for workloads like high-performance computing and AI/ML.

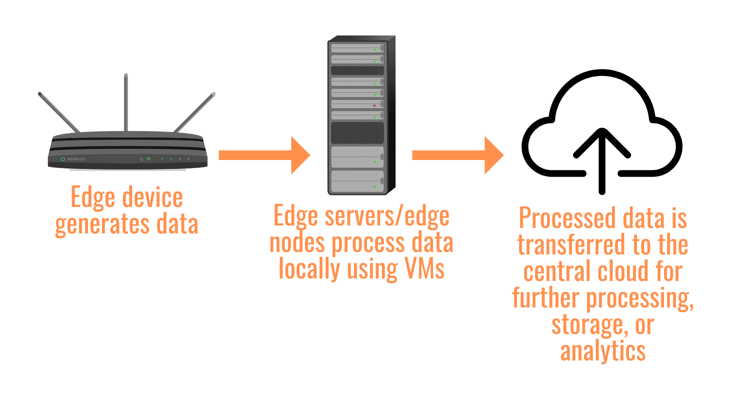

Edge Computing and Virtual Machines

Virtual machines are an important component in edge computing setups, as they permit easy deployment and execution of applications and services on edge servers.

Edge virtual machines reduce latency for real-time tasks and meet required performance standards. Additionally, they guarantee reliability and seamless data protection. The convergence of AI/ML with edge virtual machines will further reduce response times.

Hybrid and Multicloud Environments

Modern hybrid and multicloud environments heavily rely on virtualization technology.

A hybrid cloud combines on-premises infrastructure (private cloud) with third-party services delivered over the internet (public cloud).

Using virtual machines to abstract physical hardware enables the seamless movement of data and applications between both environments.

As reliance on edge workloads and Internet of Things (IoT) technology grows, these environments will increasingly be tuned for lower latency and better reliability. As you’ve understood by now, this is a common theme.

Advancements in Virtualization Technology

You already know the direction virtualization technology is heading, and there’s reason to be excited.

Organizations are finally recognizing the potential of virtual machines, and adoption numbers are skyrocketing. To put it into perspective, 66% of organizations experience increased agility after adopting VM technology, and 50% observe enhanced operational efficiency.

AI/ML and IoT technology will be pivotal to future iterations of virtual machines, and reduced latency and improved reliability will be targeted.

Virtual Machines: Transforming Modern Computing

Virtual machines enable efficient, cost-effective, and dynamic sharing of computing resources amongst workloads based in secure, isolated environments.

VMs are extensively used for development, testing, and debugging and play an instrumental role in modern IT infrastructure. From desktop environments to server farms, virtualization is revolutionizing the flexibility and efficiency of computing infrastructures.

Its diverse applications make it a must-have in most organizational tech stacks. Approximately 94% of organizations use cloud computing services like Amazon Web Services (AWS), Google Cloud, and Microsoft Azure.

AWS dominates the sphere with an impressive market share of 32%. Virtual machines are the backbone of cloud computing, so they’re at the heart of modern computing.

The best thing is that you don’t have to pay top dollar to enjoy the benefits of virtual machines. Oracle VM VirtualBox, for example, is available for free and is an excellent starting point.